One of the fundamental components that Kubernetes needs to function is a globally available configuration store. The etcd project, developed by the team at CoreOS, is a lightweight, distributed key-value store that can be configured to span multiple nodes.
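To make the idea of a shared configuration store concrete, here is a minimal single-process sketch in Python. It is a toy stand-in, not etcd's client API: the class `ToyConfigStore`, its method names, and the key layout (`/nodes/...`, `/services/...`) are all assumptions made for illustration, and it does none of the replication across nodes that etcd provides.

```python
from typing import Callable, Dict, List, Optional, Tuple


class ToyConfigStore:
    """In-memory key-value store with prefix watches (illustrative only)."""

    def __init__(self) -> None:
        self._data: Dict[str, str] = {}
        # Each watcher is a (key prefix, callback) pair.
        self._watchers: List[Tuple[str, Callable[[str, str], None]]] = []

    def put(self, key: str, value: str) -> None:
        """Store a value and notify every watcher whose prefix matches the key."""
        self._data[key] = value
        for prefix, callback in self._watchers:
            if key.startswith(prefix):
                callback(key, value)

    def get(self, key: str) -> Optional[str]:
        """Return the stored value, or None if the key is unknown."""
        return self._data.get(key)

    def list_prefix(self, prefix: str) -> Dict[str, str]:
        """Return all entries whose key starts with the given prefix."""
        return {k: v for k, v in sorted(self._data.items()) if k.startswith(prefix)}

    def watch(self, prefix: str, callback: Callable[[str, str], None]) -> None:
        """Register a callback for future writes under a key prefix."""
        self._watchers.append((prefix, callback))


if __name__ == "__main__":
    store = ToyConfigStore()
    store.watch("/nodes/", lambda k, v: print("changed:", k, v))
    store.put("/nodes/node-1", "ready")      # triggers the watcher
    store.put("/services/web", "10.0.0.5")   # does not match the prefix
    print(store.list_prefix("/nodes/"))
```

The watch callback hints at why such a store is useful to a cluster: components can react to configuration changes instead of polling for them.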

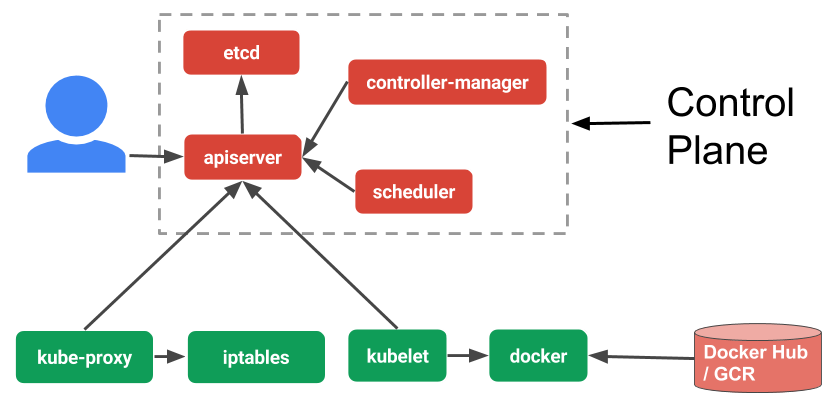

The user-defined applications running on the cluster according to a specified plan represent Kubernetes’ final layer.

## Master Server Components

The master server acts as the primary control plane for Kubernetes clusters. It serves as the main contact point for administrators and users, and also provides many cluster-wide systems for the relatively unsophisticated worker nodes. Overall, the components on the master server work together to accept user requests, determine the best ways to schedule workload containers, authenticate clients and nodes, adjust cluster-wide networking, and manage scaling and health checking responsibilities. These components can be installed on a single machine or distributed across multiple servers. We will take a look at each of the individual components associated with master servers in this section.
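The scheduling responsibility of the master server can be pictured with a deliberately simplified sketch. The function `pick_node`, the node names, and the single "free CPU" measure are hypothetical; a real scheduler weighs many more factors, so treat this only as an illustration of matching a workload to a node with enough spare capacity.

```python
from typing import Dict, Optional


def pick_node(free_cpu: Dict[str, float], requested_cpu: float) -> Optional[str]:
    """Pick the node with the most free CPU that can still fit the request.

    free_cpu maps a (hypothetical) node name to its unallocated CPU cores.
    Returns None when no node has enough capacity.
    """
    # Keep only nodes that can satisfy the request.
    candidates = {name: cpu for name, cpu in free_cpu.items() if cpu >= requested_cpu}
    if not candidates:
        return None
    # Iterate names in sorted order so ties resolve to the alphabetically first node.
    return max(sorted(candidates), key=lambda name: candidates[name])


if __name__ == "__main__":
    cluster = {"node-a": 1.0, "node-b": 2.5, "node-c": 0.5}
    print(pick_node(cluster, requested_cpu=2.0))
    print(pick_node(cluster, requested_cpu=4.0))
```

Choosing the node with the most headroom spreads work across the cluster; a scheduler could just as reasonably pack workloads tightly onto fewer nodes, which is a policy choice rather than a correctness issue.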


To understand how Kubernetes is able to provide these capabilities, it is helpful to get a sense of how it is designed and organized at a high level. Kubernetes can be visualized as a system built in layers, with each higher layer abstracting the complexity found in the lower levels.

At its base, Kubernetes brings together individual physical or virtual machines into a cluster using a shared network to communicate between each server. This cluster is the physical platform where all Kubernetes components, capabilities, and workloads are configured.

The machines in the cluster are each given a role within the Kubernetes ecosystem. One server (or a small group in highly available deployments) functions as the master server. This server acts as a gateway and brain for the cluster by exposing an API for users and clients, health checking other servers, deciding how best to split up and assign work (known as “scheduling”), and orchestrating communication between other components. The master server acts as the primary point of contact with the cluster and is responsible for most of the centralized logic Kubernetes provides.

The other machines in the cluster are designated as nodes: servers responsible for accepting and running workloads using local and external resources. To help with isolation, management, and flexibility, Kubernetes runs applications and services in containers, so each node needs to be equipped with a container runtime (like Docker or rkt). The node receives work instructions from the master server and creates or destroys containers accordingly, adjusting networking rules to route and forward traffic appropriately.

As mentioned above, the applications and services themselves are run on the cluster within containers. The underlying components make sure that the desired state of the applications matches the actual state of the cluster. Users interact with the cluster by communicating with the main API server either directly or with clients and libraries. To start up an application or service, a declarative plan is submitted in JSON or YAML defining what to create and how it should be managed. The master server then takes the plan and figures out how to run it on the infrastructure by examining the requirements and the current state of the system.
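The relationship between a declarative plan and the actual state of the cluster can be sketched as a small reconciliation step. This is an illustration under stated assumptions, not Kubernetes' implementation: the plan fields (`name`, `image`, `replicas`), the container naming scheme, and the function `reconcile` are all invented for this example and do not follow the real manifest schema.

```python
import json
from typing import Dict, List

# A hypothetical declarative plan, as it might be submitted in JSON.
PLAN_JSON = '{"name": "web", "image": "example/web:1.0", "replicas": 3}'


def reconcile(plan: dict, running: List[str]) -> Dict[str, List[str]]:
    """Compute the actions that move the actual state toward the desired state.

    plan:    parsed declarative plan (desired state).
    running: names of containers that currently exist (actual state).
    """
    desired = plan["replicas"]
    actions: Dict[str, List[str]] = {"create": [], "destroy": []}

    if len(running) < desired:
        # Too few containers: create new ones using the first unused names.
        existing = set(running)
        index = 0
        while len(running) + len(actions["create"]) < desired:
            name = f"{plan['name']}-{index}"
            if name not in existing:
                actions["create"].append(name)
            index += 1
    elif len(running) > desired:
        # Too many containers: keep the first `desired` names, destroy the rest.
        actions["destroy"] = sorted(running)[desired:]

    return actions


if __name__ == "__main__":
    plan = json.loads(PLAN_JSON)
    print(reconcile(plan, ["web-0"]))
    print(reconcile(plan, ["web-0", "web-1", "web-2", "web-3"]))
```

The user only states the outcome they want (three replicas); the difference between that and what is running determines which containers are created or destroyed.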

Kubernetes is a powerful open-source system, initially developed by Google, for managing containerized applications in a clustered environment. It aims to provide better ways of managing related, distributed components and services across varied infrastructure. In this guide, we’ll discuss some of Kubernetes’ basic concepts. We will talk about the architecture of the system, the problems it solves, and the model that it uses to handle containerized deployments and scaling.

Kubernetes, at its basic level, is a system for running and coordinating containerized applications across a cluster of machines. It is a platform designed to completely manage the life cycle of containerized applications and services using methods that provide predictability, scalability, and high availability.

As a Kubernetes user, you can define how your applications should run and the ways they should be able to interact with other applications or the outside world. You can scale your services up or down, perform graceful rolling updates, and switch traffic between different versions of your applications to test features or roll back problematic deployments. Kubernetes provides interfaces and composable platform primitives that allow you to define and manage your applications with high degrees of flexibility, power, and reliability.